multithreading

What is multithreading?

Multithreading is the ability of a program or an operating system to serve more than one user at a time without requiring multiple copies of the program to run on the computer. Multithreading can also handle multiple requests from the same user.

Each user request for a program or system service is tracked as a thread with a separate identity. When the program's work on one request is interrupted by other requests, the work status of the interrupted request is tracked so that the work can resume and run to completion. In this context, a user can also be another program.
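The idea of tracking each request as a thread with its own identity can be sketched in Python. This is only an illustration: the `handle_request` function, the `results` dictionary and the `request-N` thread names are hypothetical and not part of any particular system.

```python
import threading

# Shared record of which thread handled which request (hypothetical structure).
results = {}
results_lock = threading.Lock()

def handle_request(request_id: int) -> None:
    """Handle one request and record the identity of the thread that ran it."""
    thread = threading.current_thread()
    with results_lock:  # protect the shared dictionary
        results[request_id] = thread.name

# One thread per request, each with a distinct name.
threads = [
    threading.Thread(target=handle_request, args=(i,), name=f"request-{i}")
    for i in range(3)
]
for t in threads:
    t.start()
for t in threads:
    t.join()  # wait until the work for each request is complete

print(results)
```

Each request ends up associated with the name of the thread that served it, and `join()` is how the main program waits for a given thread's work to finish.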

Multithreading benefits from a fast central processing unit (CPU) and ample memory, because the processor must switch between threads quickly and each thread needs its own stack. A single processor executes pieces, or threads, of various programs so quickly that the computer appears to be handling multiple requests simultaneously.

How does multithreading work?

The fast processing speeds of today's microprocessors make multithreading practical. Even though a single-core processor runs only one thread at any instant, it switches between threads from multiple programs so quickly that the programs appear to execute concurrently.

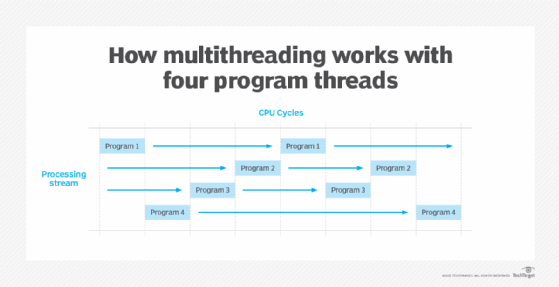

At any moment, a processor core executes a single thread, which belongs to a stream of related threads in the same program. The processor switches between threads so quickly that all the streams appear to execute at the same time. A program that runs many threads in this way is described as multithreaded.

Each thread contains information about how it relates to the overall program. Within a processing stream, some threads are executed while others wait for their turn. Multithreading requires programmers to pay careful attention to prevent race conditions, in which the result depends on the unpredictable order in which threads access shared data, and deadlock, in which threads wait on each other indefinitely.
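A minimal Python sketch shows one common way to guard against both problems. It assumes a shared counter and a hypothetical `Account` class, neither of which comes from the article. A lock protects the counter from a race condition, and acquiring locks in a fixed order is one widely used way to avoid deadlock.

```python
import threading

# Part 1: avoid a race condition on a shared counter.
counter = 0
counter_lock = threading.Lock()

def increment(times: int) -> None:
    global counter
    for _ in range(times):
        # Only one thread at a time may read, add to and write the counter.
        with counter_lock:
            counter += 1

workers = [threading.Thread(target=increment, args=(10_000,)) for _ in range(4)]
for w in workers:
    w.start()
for w in workers:
    w.join()

# Part 2: avoid deadlock by always taking locks in the same order.
class Account:
    """Hypothetical account with its own lock."""
    def __init__(self, account_id: int, balance: int) -> None:
        self.account_id = account_id
        self.balance = balance
        self.lock = threading.Lock()

def transfer(src: Account, dst: Account, amount: int) -> None:
    # Lock the account with the smaller id first, so two opposite transfers
    # can never each hold one lock while waiting for the other.
    first, second = sorted((src, dst), key=lambda acct: acct.account_id)
    with first.lock, second.lock:
        src.balance -= amount
        dst.balance += amount

def repeat_transfer(src: Account, dst: Account, times: int) -> None:
    for _ in range(times):
        transfer(src, dst, 1)

a = Account(1, 100)
b = Account(2, 100)
t1 = threading.Thread(target=repeat_transfer, args=(a, b, 1000))
t2 = threading.Thread(target=repeat_transfer, args=(b, a, 1000))
t1.start()
t2.start()
t1.join()
t2.join()

print(counter, a.balance, b.balance)  # 40000 100 100
```

Without `counter_lock`, updates from different threads could overwrite one another. Without the fixed lock order in `transfer`, the two transfer threads could each hold one account's lock and wait forever for the other.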

An example of multithreading

Multithreading is used in many different contexts. One example occurs when data is entered into a spreadsheet and used for a real-time application.

When working on a spreadsheet, a user enters data into a cell, and the following may happen:

- column widths may be adjusted;

- repeating cell elements may be replicated; and

- spreadsheets may be saved multiple times as the file is further developed.

Each activity can run on its own thread, so the work is processed without slowing down the overall operation of the spreadsheet application.
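The spreadsheet scenario can be approximated with a short Python sketch. It is a simplified illustration, not how any real spreadsheet is implemented: the `autosave` function, the `cells` dictionary and the `time.sleep` call standing in for slow disk access are all assumptions.

```python
import threading
import time

saved_snapshots = []  # stands in for files written to disk

def autosave(snapshot: dict) -> None:
    """Save a copy of the sheet in the background."""
    time.sleep(0.1)  # stand-in for slow disk I/O
    saved_snapshots.append(snapshot)

cells = {"A1": 10}

# Copy the sheet first so later edits do not change what is being saved.
saver = threading.Thread(target=autosave, args=(dict(cells),))
saver.start()

# The main thread keeps accepting edits while the save is in progress.
cells["A2"] = 20
cells["A3"] = cells["A1"] + cells["A2"]

saver.join()  # wait for the background save to finish
print(saved_snapshots, cells)
```

The save runs on its own thread, so the edits to `A2` and `A3` do not have to wait for it to finish.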

Multithreading vs. multitasking vs. multiprocessing

Multithreading differs from multitasking and multiprocessing. However, multitasking and multiprocessing are related to multithreading in the following ways:

- Multitasking is a computer's ability to execute two or more programs concurrently. Multithreading supports multitasking by breaking programs into smaller, executable threads. Each thread has the programming elements it needs to carry out its part of the main program, and on a single processor the computer executes the threads one at a time.

- Multiprocessing uses more than one CPU to speed up overall processing and supports multitasking.
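One practical way to see the difference between threads and separately running programs is to compare process identifiers. In the Python sketch below, the `record_pid` helper and the variable names are hypothetical. The threads all report the same process ID because they live inside one program, while a second program started with `subprocess.run` gets a process ID of its own.

```python
import os
import subprocess
import sys
import threading

thread_pids = []
pids_lock = threading.Lock()

def record_pid() -> None:
    # Every thread in this program belongs to the same process.
    with pids_lock:
        thread_pids.append(os.getpid())

threads = [threading.Thread(target=record_pid) for _ in range(3)]
for t in threads:
    t.start()
for t in threads:
    t.join()

# Start a second copy of the Python interpreter as a separate program.
child = subprocess.run(
    [sys.executable, "-c", "import os; print(os.getpid())"],
    capture_output=True,
    text=True,
    check=True,
)
child_pid = int(child.stdout.strip())

print("threads ran in process:", set(thread_pids))
print("separate program ran in process:", child_pid)
```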

Multithreading vs. parallel processing and multicore processors

Parallel processing is when two or more CPUs are used to handle separate parts of a task. Multiple tasks can be executed concurrently in a parallel processing system. This differs from using a single processor where only one thread is executed at a time, and the tasks that make up a thread are scheduled sequentially.

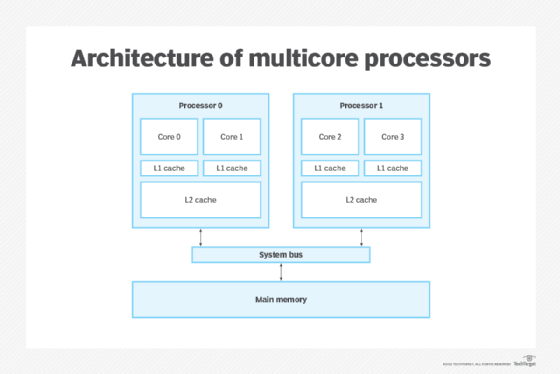

A multicore processor has more than one independent processing unit, or core, on a single chip. It differs from a single-core CPU, which has only one processing unit. Multicore CPUs provide increased speed and responsiveness compared to single-core processors.

Multicore processors can execute in parallel as many threads as there are CPU cores. This means parts of a task are completed faster. On a single-core system, the threads of a multithreaded application don't execute in parallel. Instead, they share a single processor core.
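A program can ask how many cores are available and size its pool of worker threads to match. The Python sketch below uses the standard library's `os.cpu_count` and `ThreadPoolExecutor`, while the `square` function is only a placeholder task. In the standard CPython interpreter, a global interpreter lock generally keeps threads from running Python bytecode in parallel, so this sketch shows how work is divided among threads rather than a guaranteed speedup.

```python
import os
from concurrent.futures import ThreadPoolExecutor

# cpu_count() can return None if the count cannot be determined.
cores = os.cpu_count() or 1

def square(n: int) -> int:
    """Placeholder for one part of a larger task."""
    return n * n

# One worker thread per core; map() returns results in input order.
with ThreadPoolExecutor(max_workers=cores) as pool:
    squares = list(pool.map(square, range(8)))

print(cores, squares)
```

On a single-core machine the pool has one worker and the parts run one after another. With more cores, the operating system can schedule the worker threads on different cores.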
